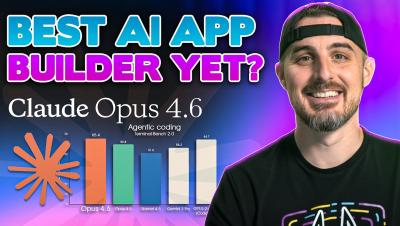

I Built a Production-Ready App in 20 Minutes with Claude Opus 4.6

My boss dropped a bombshell at 4:00 PM: build a secure, production-ready app from scratch by tomorrow morning. Instead of panicking, I put Claude Opus 4.6 to the test. In this video, I walk you through the end-to-end process of using an AI agent to architect, code, and debug a full-stack application. We’ll look at "Plan Mode," how the AI handles environment errors (like SQLite issues on Windows), and, most importantly, how we checked the AI's code for security vulnerabilities using Snyk.