How multi-agent systems work in LimaCharlie

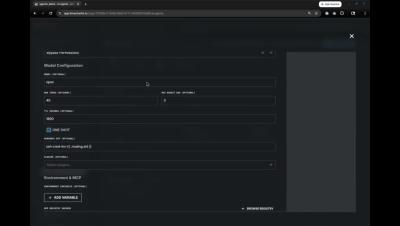

This video walks through how single agents and multi-agent systems are built and run inside the LimaCharlie platform. Agents in LimaCharlie are defined declaratively: each agent specifies the model it runs, its instructions, the tools it can access, the events that trigger it, and the guardrails it operates under. Because the definition is plain data rather than imperative code, agents are version-controllable, reviewable, and portable across tenants.
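
To make the idea of a declarative agent concrete, here is a minimal Python sketch of what such a definition could look like as data. It is not LimaCharlie's actual schema or API: the `AgentSpec` class, its field names, and every value in it (agent name, model name, tool names, event name, guardrail keys) are hypothetical and chosen only to mirror the five elements listed above.

```python
import json
from dataclasses import asdict, dataclass, field


# Hypothetical shape for illustration only; not LimaCharlie's real schema.
@dataclass(frozen=True)
class AgentSpec:
    name: str
    model: str                       # the model the agent runs
    instructions: str                # what the agent is told to do
    tools: tuple = ()                # tools the agent may call
    triggers: tuple = ()             # events that start the agent
    guardrails: dict = field(default_factory=dict)  # operating limits


def to_document(spec: AgentSpec) -> str:
    """Serialize a spec to stable, diff-friendly JSON."""
    # sort_keys keeps the output deterministic, so diffs show only real changes.
    return json.dumps(asdict(spec), indent=2, sort_keys=True)


# All values below are made-up placeholders.
triage = AgentSpec(
    name="triage-agent",
    model="example-model",
    instructions="Summarize new detections and flag likely false positives.",
    tools=("lookup_sensor", "add_case_note"),
    triggers=("detection_created",),
    guardrails={"max_turns": 5, "read_only": True},
)

doc = to_document(triage)
print(doc)
```

Because the whole agent is a single document with deterministic output, it can be committed to a repository, reviewed as a diff, and applied unchanged to another tenant, which is the property the declarative approach is meant to provide.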