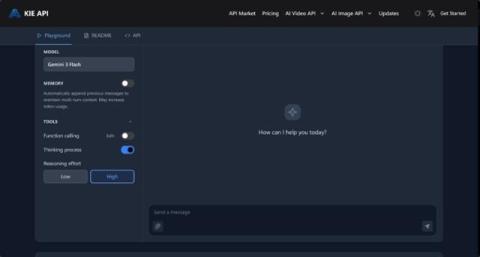

Building Smarter Virtual Assistants with Gemini 3 Flash API: AI for Seamless Workflow Automation

As teams become more distributed and workloads grow, effective automation tools matter more than ever. Traditional collaboration methods often fall short when handling repetitive tasks, managing high volumes of information, or providing real-time, intelligent support. That's where AI virtual assistants come in: they change how teams collaborate, streamline workflows, and boost productivity.