
The Use Of AI In Cybersecurity - Consultants Roundtable || Razorthorn Security

How Can AI Improve Cyber Security?

Right now, organisations using AI cybersecurity tools like SenseOn can improve their cybersecurity in three core ways. In the future, however, one of the most significant benefits of AI will be its ability to protect organisations from… AI. To see why, let’s jump into a time machine.

Why you need a security companion for AI-generated code

Everyone is talking about generative artificial intelligence (GenAI), and a massive wave of developers has already incorporated this life-changing technology into their work. However, GenAI coding assistants should only ever be used in tandem with AI security tools. Let's take a look at why this is and what we're seeing in the data. Thanks to AI assistance, developers are building faster than ever.

From Bits to Bots: Generative AI's Role in Modern Cybersecurity

The Role of AI in Your Governance, Risk and Compliance Program

In today’s rapidly evolving business landscape, organizations face an ever-increasing array of risks and compliance challenges. As businesses strive to adapt to the digital age, enhancing their governance, risk management, and compliance (GRC) strategies has become imperative. Fortunately, the fusion of artificial intelligence (AI) and GRC practices presents a transformative opportunity.

Navigating the Complex AI Regulatory Landscape - Transparency, Data, and Ethics

Ahead of the upcoming AI Safety Summit to be held at the UK’s famous Bletchley Park in November, I wanted to outline three areas that I would like to see the summit address, to help simplify the complex AI regulatory landscape. When we start any conversation about the risks and potential use cases for an artificial intelligence (AI) or machine learning (ML) technology, we must be able to answer three key questions.

Most Organizations Believe Malicious Use of AI is Close to Evading Detection

As organizations continue to believe that the malicious use of artificial intelligence (AI) will outpace its defensive use, new data on the future of AI in cyber attacks and defenses should leave you very worried. It all started with the proposed misuse of ChatGPT to write better emails and has, for now, evolved into purpose-built generative AI tools for building malicious emails, or worse, for creating anything an attacker would need from a simple prompt.

How to Choose Effective AI Tools for Cyber Security In 2023

If you are searching for ways to realise the benefits of cybersecurity AI tools, or want to find out which AI tools will really make a difference in your SOC, you’re not alone. A World Economic Forum survey last year showed that almost half of all security leaders thought AI and machine learning would have the greatest influence on stopping cyber attacks and malware over the next two years. And that was before ChatGPT started an AI frenzy.

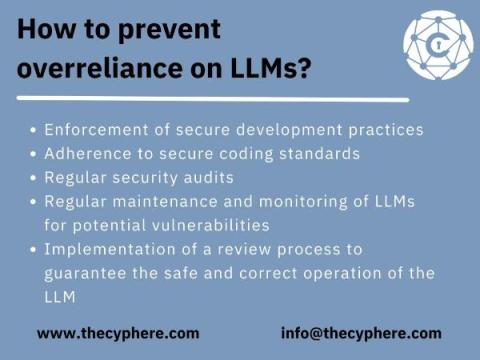

OWASP Top 10 for Large Language Models, examples and attack mitigation

As the world embraces the power of artificial intelligence, large language models (LLMs) have become a critical tool for businesses and individuals alike. However, with great power comes great responsibility – ensuring the security and integrity of these models is of utmost importance.