Securing AI Data with Protecto Privacy Vault

Meet Lookout SAIL: A Generative AI Tailored For Your Security Operations

Today, cybersecurity companies are in a never-ending race against cybercriminals, each seeking new tactics to outpace the other. The newfound accessibility of generative artificial intelligence (gen AI) has revolutionized how people work, but it has also made threat actors more efficient. Attackers can now quickly create phishing messages or automate vulnerability discovery.

AI's Role in Cybersecurity: Black Hat USA 2023 Reveals How Large Language Models Are Shaping the Future of Phishing Attacks and Defense

At Black Hat USA 2023, a session led by a team of security researchers, including Fredrik Heiding, Bruce Schneier, Arun Vishwanath, and Jeremy Bernstein, unveiled an intriguing experiment. They tested large language models (LLMs) to see how well they performed at both writing convincing phishing emails and detecting them. The accompanying technical paper is available as a PDF.

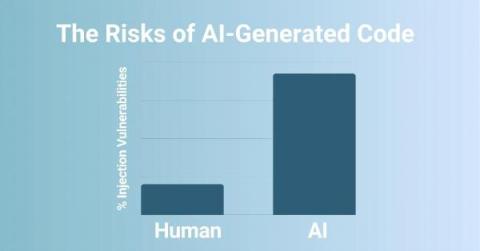

The Risks of AI-Generated Code

CISO Global Receives $49 Million Valuation for Argo Edge Cloud Security Platform

5 Intriguing Ways AI Is Changing the Landscape of Cyber Attacks

In today's world, cybercriminals are learning to harness the power of AI. Cybersecurity professionals must already be prepared for current threats such as zero-days, insider threats, and supply chain attacks, and now they must add artificial intelligence (AI), specifically generative AI, to that list. AI can revolutionize industries, but cybersecurity leaders and practitioners should be mindful of its capabilities and ensure it is used effectively.

WormGPT and FraudGPT - The Rise of Malicious LLMs

As technology continues to evolve, there is growing concern about the potential for large language models (LLMs), like ChatGPT, to be used for criminal purposes. In this blog post, we discuss two such LLM engines that were recently made available on underground forums: WormGPT and FraudGPT. If criminals were to possess their own ChatGPT-like tool, the implications for cybersecurity, social engineering, and overall digital safety could be significant.

The Risks and Rewards of ChatGPT in the Modern Business Environment

ChatGPT continues to lead the news cycle and grow in popularity, with new applications and uses for the platform seemingly uncovered each day. However, as interesting as this solution is, and as many efficiencies as it already provides to modern businesses, it’s not without its risks.

Limitations of a single AI model

Today’s discussions of AI are often colored by emotions like fear and awe, and by the tendency to reduce AI to large language models (LLMs), but this isn’t the entire story. So, let’s step back from these fears and dig into the data explaining why AI is richer than LLMs.