Can threat actors use ChatGPT to make malware? #ai #cybersecurity #gpt5

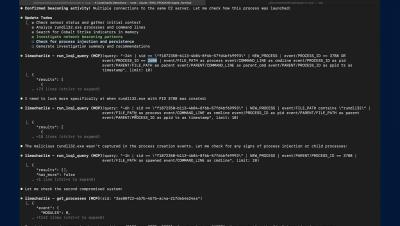

GPT-5 was jailbroken in under 24 hours using simple "storytelling" techniques that bypass its safety guardrails. The key insight from our podcast? Individual AI requests can each appear legitimate yet become dangerous when combined. A bad actor can request network code in one session, a convincing email in another, and a credential collection form in a third. Each task looks normal on its own, but together they form a complete phishing toolkit.