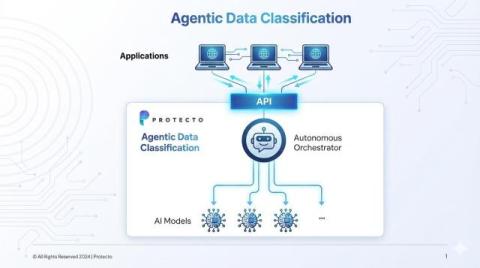

Agentic Data Classification: A New Architecture for Modern Data Protection

In the evolving landscape of data protection and compliance, data classification is the bedrock of safe AI workflows. Yet legacy approaches rely on a single model that is fixed, rigid, and limited in context. Our agentic data classification approach breaks from this pattern: rather than depending on any one model, we orchestrate a dynamic, intelligent layer that automatically selects the right model for each job.
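To make the idea of a selection layer concrete, here is a minimal Python sketch of routing an input to one of several classifiers. It is an illustration under stated assumptions, not a description of the actual system: the `Classifier` type, the `can_handle` predicates, the two toy classifiers, and the labels are all hypothetical, and a real orchestration layer would likely weigh far richer context than these simple checks.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Classifier:
    """A hypothetical pluggable classifier with a routing predicate."""
    name: str
    can_handle: Callable[[str], bool]  # assumption: routing is a simple yes/no check
    classify: Callable[[str], str]     # returns a sensitivity label


def ssn_pattern(text: str) -> str:
    # Toy pattern-based classifier: flags US SSN-shaped strings.
    return "PII" if re.search(r"\b\d{3}-\d{2}-\d{4}\b", text) else "PUBLIC"


def finance_keywords(text: str) -> str:
    # Toy keyword-based classifier used as the general fallback.
    return "FINANCIAL" if "invoice" in text.lower() else "PUBLIC"


# Ordered from most specific to most general; the last entry accepts anything.
CLASSIFIERS: List[Classifier] = [
    Classifier("ssn-pattern", lambda t: any(ch.isdigit() for ch in t), ssn_pattern),
    Classifier("finance-keywords", lambda t: True, finance_keywords),
]


def route(text: str, classifiers: List[Classifier] = CLASSIFIERS) -> Classifier:
    """Pick the first classifier whose predicate accepts the input."""
    for candidate in classifiers:
        if candidate.can_handle(text):
            return candidate
    raise LookupError("no classifier accepted the input")


def classify(text: str) -> Tuple[str, str]:
    """Return the chosen classifier's name together with its label."""
    chosen = route(text)
    return chosen.name, chosen.classify(text)


if __name__ == "__main__":
    for sample in ["SSN 123-45-6789", "Please pay this invoice", "hello"]:
        print(sample, "->", classify(sample))
```

The point of the sketch is the separation of concerns: callers only use `classify`, while the choice of model lives in `route`, so classifiers can be added or swapped without touching the calling code.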